L2: Processes, Threads and Synchronization

Processes & Threads

Process

A program in execution, identified by PID

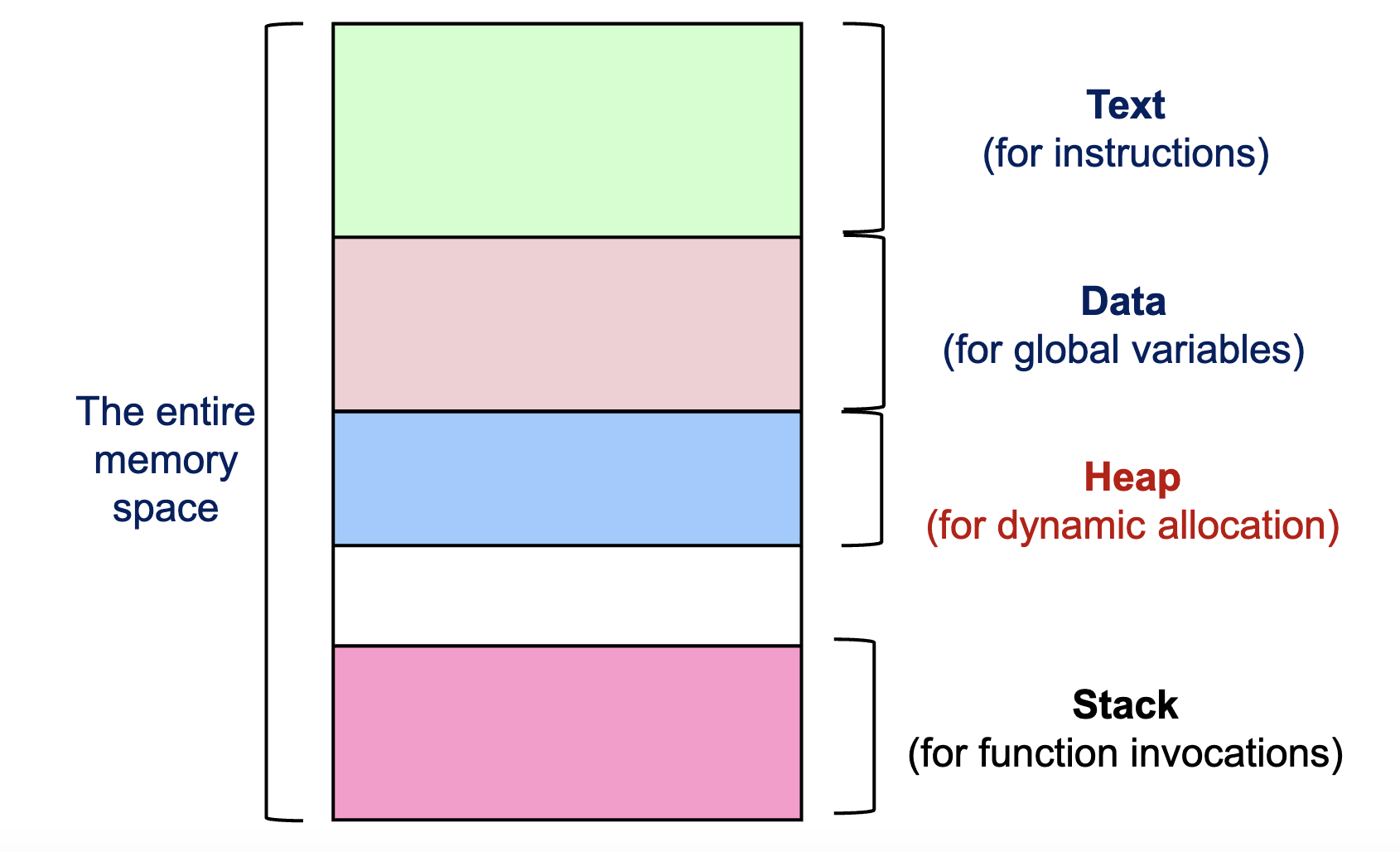

- Comprises: executable program (PC), global data (OS resources: open files, network connections), stack or heap, and current values of the registers (GPRs and Special)

- Own address space: exclusive access to its data

- Two or more processes exchange data → need explicit communication

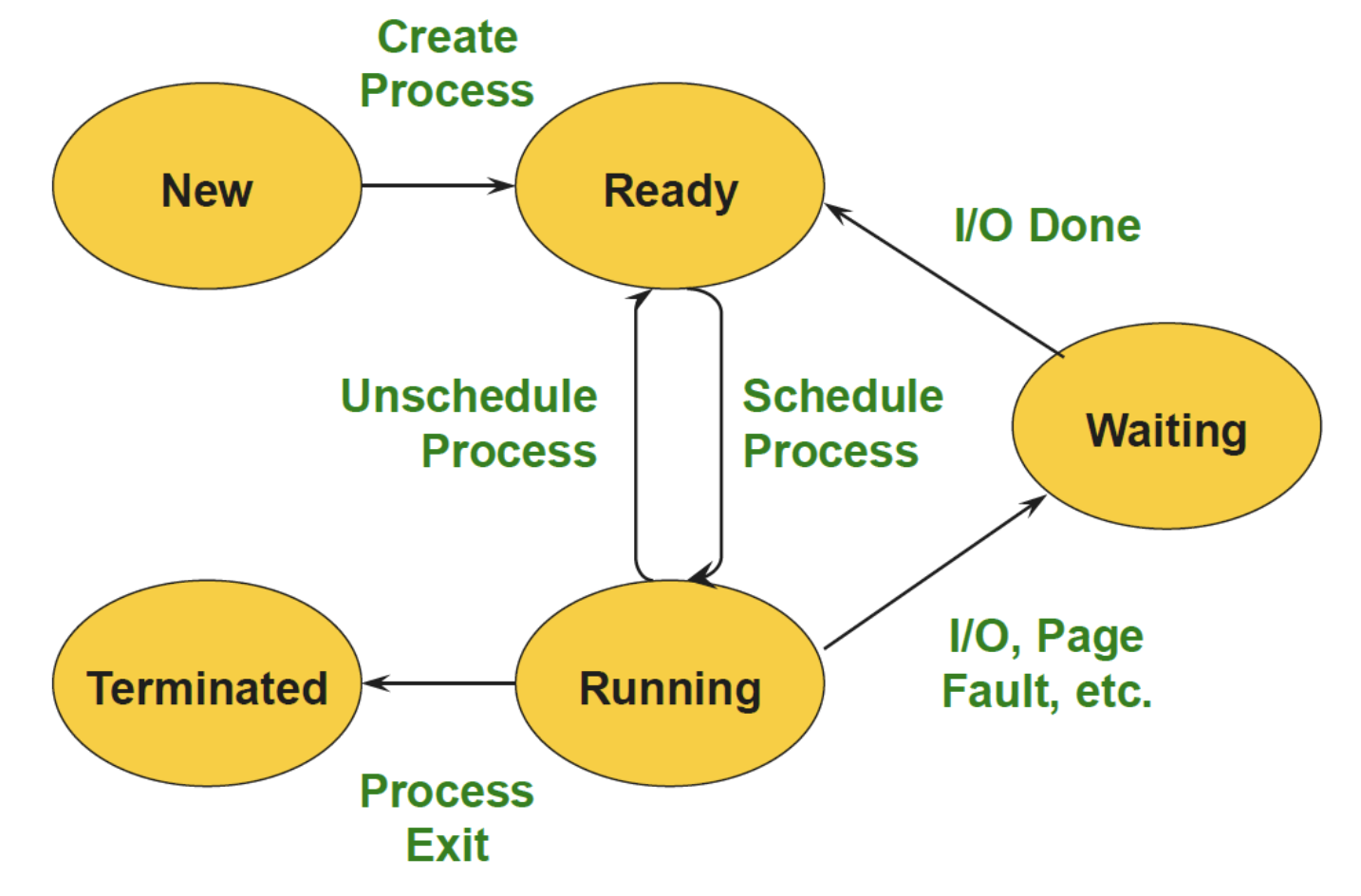

Multi-programming (Multitasking):

- Several processes at different stages of execution

- Need context switch

- States of the suspended process must be saved → overhead

- 2 types of execution:

- Time slicing execution → pseudo-parallelism

- Parallel execution of processes on different resources

IPC: Cooperating processes have to share information

- Shared memory → Need to protect access when reading/writing with locks

- Message passing: Blocking & non-blocking, Synchronous & asynchronous

- Unix specific: Pipes & Signal

Exceptions:

- Executing a machine level instruction can cause exception

- E.g: Overflow, Underflow, Division by Zero, Illegal memory address, Misaligned memory access

- Synchronous: Occur due to program execution

- Have to execute an exception handler

Interrupts:

- External events can interrupt the execution of a program

- Usually hardware related: Timer, Mouse Movement, Keyboard Pressed, etc

- Asynchronous: Occur independently of program execution

- Have to execute an interrupt handler

Disadvantages:

- Creating a new process is costly

- Overhead of system calls

- All data structures must be allocated, initialized and copied

- Communicating between processes costly → Go through the OS

Thread

- Extension of process model:

- A process may consist of multiple independent control flows called threads

- The thread defines a sequential execution stream within a process(PC, SP, registers)

- Threads share the address space of the process:

- All threads belonging to the same process see the same value

- shared-memory architecture

- Thread generation is faster than process generation: No copy of the address space is necessary

- Different threads of a process can be assigned run on different cores of a multicore processor

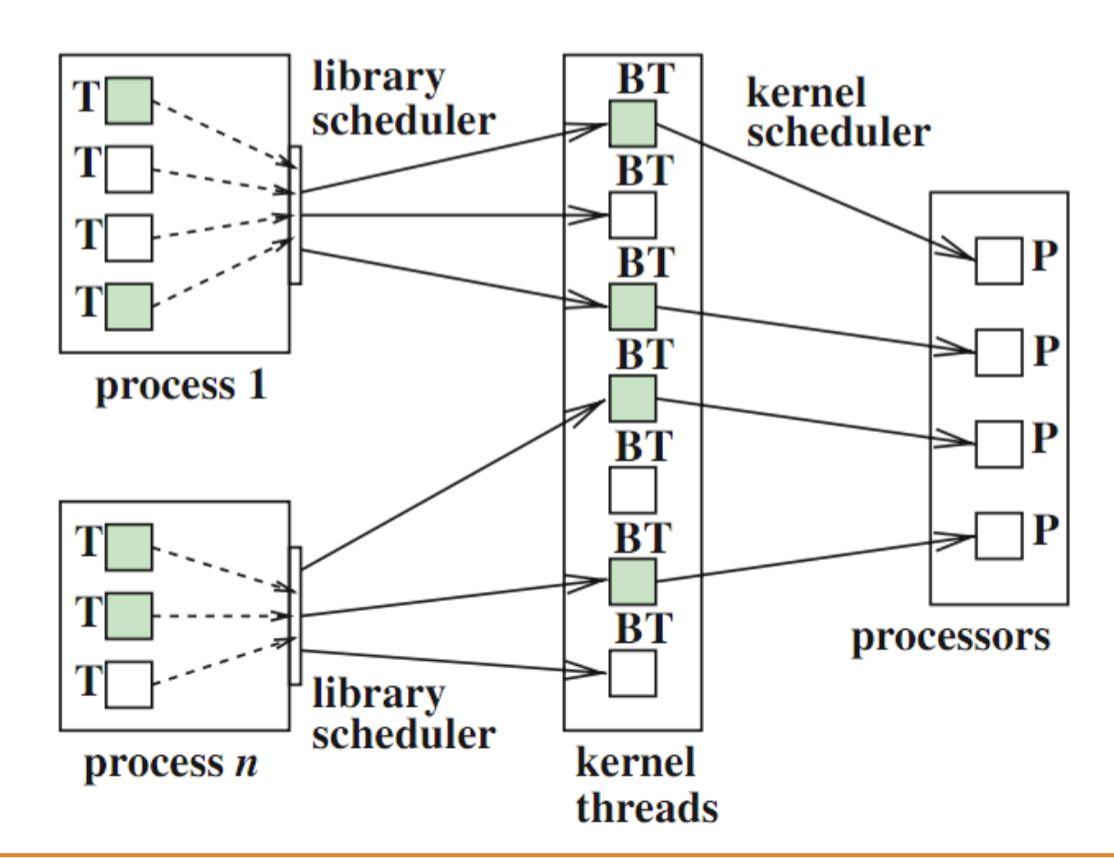

- 2 types: User-level threads, Kernel threads

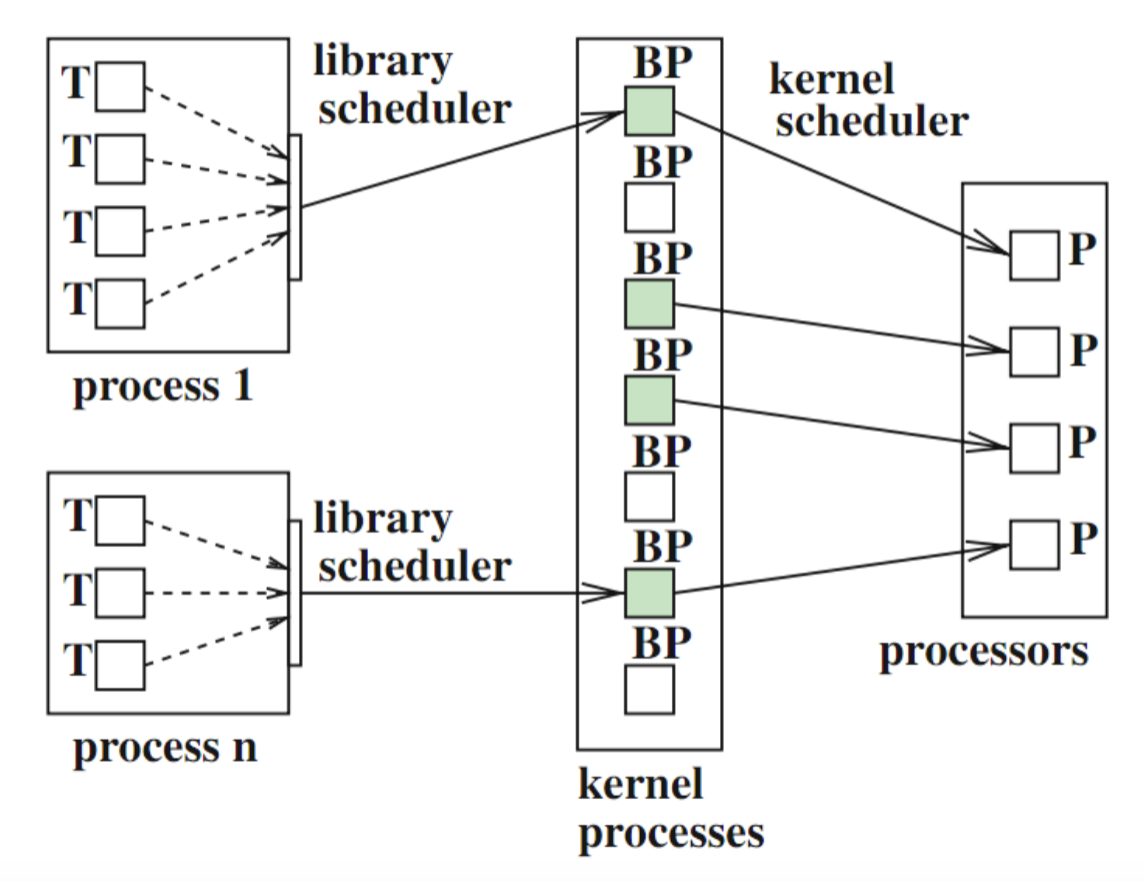

User-level threads:

- Managed by a thread library – OS unaware of user-level threads so no OS support

- Advantages: switching thread context is fast

- Disadvantages

- OS cannot map different threads of the same process to different execution resources → no parallelism

- OS cannot switch to another thread if one thread executes a blocking I/O operation

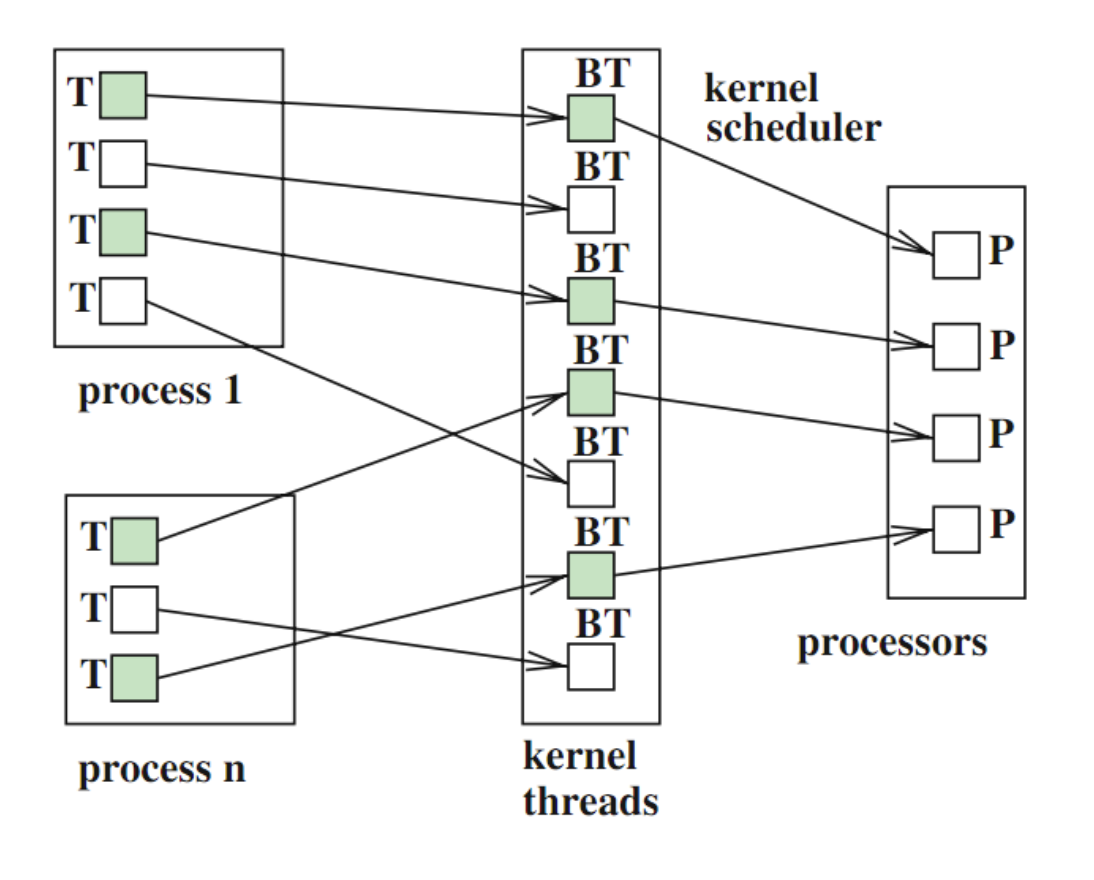

Kernel threads:

- OS is aware of the existence of threads and can react correspondingly

- Avoid disadvantages of user-level threads

- Efficient use of the cores in a multicore system

many-to-many mapping

Synchronization

Synchronization mechanisms

Lock:

acquire()andrelease()- Locks can spin (a spinlock) or block (a mutex)

Semaphores

Semaphore::Wait(): decrement (alsoP())Semaphore::Signal(): increment, (alsoV())- Semaphore value is always greater than or equal to 0

- Mutex semaphore (or binary semaphore): count = 1

- Counting semaphore (or general semaphore): count = N

Barrier:

Synchronization problems

Deadlock: exist if and only if the following four conditions hold simultaneously:

- Mutual exclusion – At least one resource must be held in a non-sharable mode

- Hold and wait – There must be one process holding one resource and waiting for another resource

- No pre-emption – Resources cannot be pre-empted (critical sections cannot be aborted externally)

- Circular wait – There must exist a set of processes [P1, P2, P3,…,Pn] such that P1 is waiting for P2, P2 for P3, etc

Starvation: side effect of the scheduling algorithm

- A high priority process always prevents a low priority process from running on the CPU

- One thread always beats another when acquiring a lock

Livelock:

Classic Synchronization Problems

Producer-consumer:

mutex = Semaphore(1);items = Semaphore(0)

1event = waitForEvent()2mutex.wait()3

4buffer.add(event)5

6items.signal()7mutex.signal()1items.wait()2mutex.wait()3

4event = buffer.get()5

6mutex.signal()7event.process()Producer-consumer with Finite Buffer:

1event = waitForEvent()2spaces.wait()3mutex.wait()4

5buffer.add ( event )6

7mutex.signal ()8items.signal ()1items.wait()2mutex.wait()3

4event = buffer.get()5

6mutex.signal()7spaces.signal()8event.process()Readers-writers Problem:

- Any number of readers can be in the critical section simultaneously

- Writers must have exclusive access to the critical section

int readers = 0,mutex = Semaphore (1),roomEmpty = Semaphore (1)

1roomEmpty.wait()2# critical section for writers3roomEmpty.signal()1mutex.wait ()2readers += 13if readers == 1:4 roomEmpty.wait () # first in locks5

6mutex.signal ()7# critical section for readers8mutex.wait ()9

10readers -= 111if readers == 0:12 roomEmpty.signal () # last out unlocks13mutex.signal ()